Image compositor for Android

2020-06-07

This is an idea I want to work on.

One really cool tool in Blender, the 3D editor, is the compositor.

The compositor is a pipeline for manipulating things like textures, normals, vectors and all other sorts of values.

One way to use it is to apply transformations to the final image after it has already been rendered.

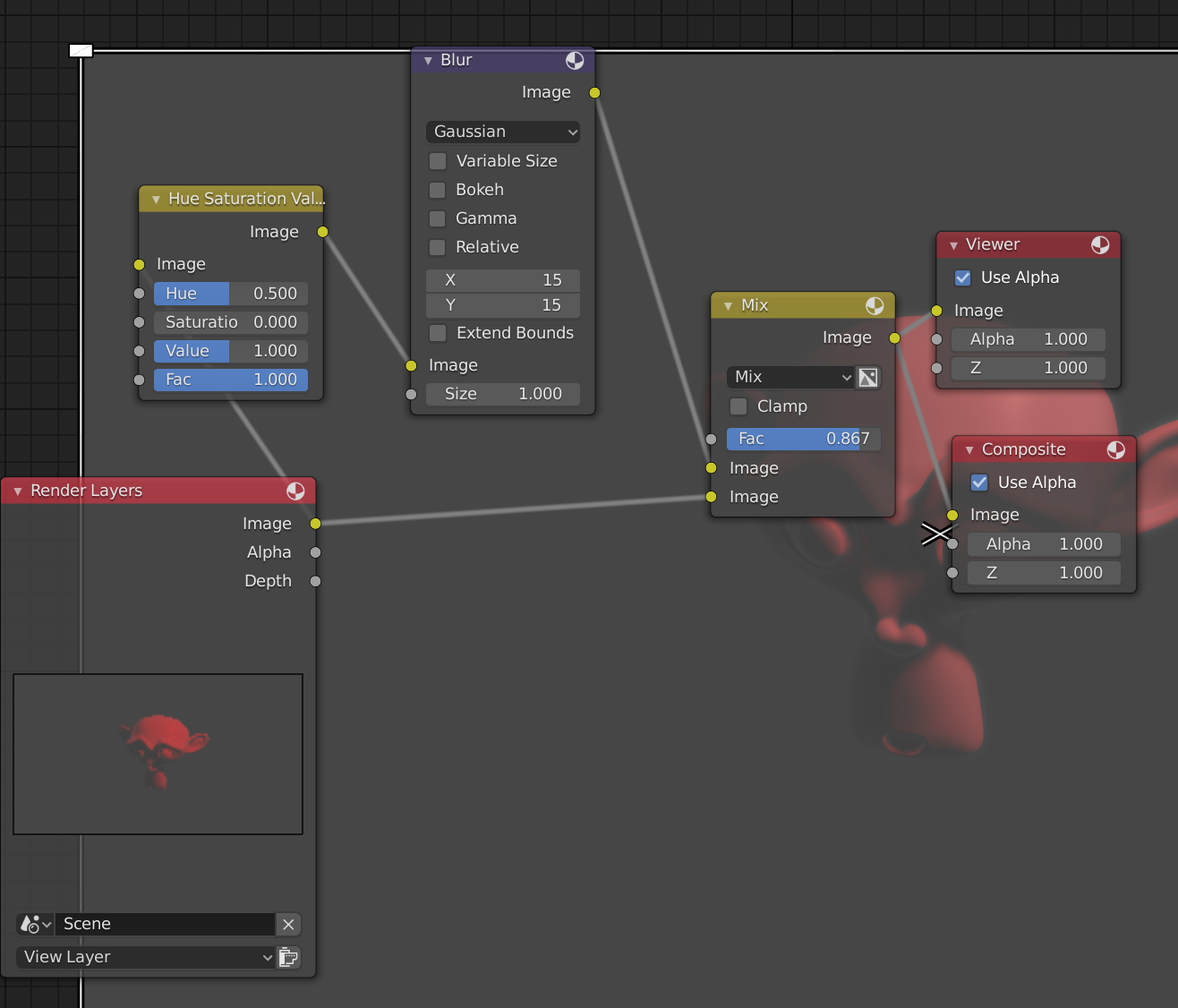

For example, say you want to take the final render, blur it and remix it again with the render, you would have something like this:

There are some advantages to creating a pipeline instead of applying the transformations one by one in an editor.

- Changes are non-destructive

- The pipeline can be reused
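
To make the blur-and-remix example and these two advantages concrete, here is a minimal sketch in plain Python. It is not how Blender implements its compositor: the names `box_blur`, `mix` and `soft_glow` are made up for illustration, and images are assumed to be small grayscale grids of floats.

```python
from typing import List

Image = List[List[float]]  # grayscale image: rows of values in [0, 1]


def box_blur(img: Image) -> Image:
    """Return a new image where each pixel is the mean of its 3x3 neighborhood."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            # Collect only the neighbors that fall inside the image bounds.
            vals = [
                img[ny][nx]
                for ny in range(max(0, y - 1), min(h, y + 2))
                for nx in range(max(0, x - 1), min(w, x + 2))
            ]
            row.append(sum(vals) / len(vals))
        out.append(row)
    return out


def mix(a: Image, b: Image, factor: float) -> Image:
    """Blend two same-sized images: factor=0 gives a, factor=1 gives b."""
    return [
        [(1 - factor) * pa + factor * pb for pa, pb in zip(ra, rb)]
        for ra, rb in zip(a, b)
    ]


def soft_glow(render: Image, factor: float = 0.5) -> Image:
    """The pipeline: blur the render, then mix the blur back over the render."""
    return mix(render, box_blur(render), factor)


render = [[0.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 0.0]]
result = soft_glow(render)         # non-destructive: `render` is left untouched
other = [[1.0, 1.0], [1.0, 1.0]]
other_result = soft_glow(other)    # reusable: same pipeline, different image
```

Every node returns a new image instead of modifying its input, so the original render stays available, and the whole pipeline is just a function that can be applied to any other image.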

Also, a visual editor is a really good fit for this kind of solution.

Now for the second part of the idea: another really, really cool piece of tech is style transfer.

Style transfer is a technique that uses machine learning to apply a style to an image. One app that does this, for example, is the Prisma photo editor.

Applying different style transfers in succession can lead to some interesting results.

Although Prisma is available for Android, there is nothing similar to the Blender compositor available in app form.

Combining those two ideas, we could have a compositing app in which some nodes of the pipeline actually do their processing on a server.

Those nodes could do style transfer.

Not only that: with these transformations being done on the server, we could have nodes that select faces or objects, or maybe redirect the flow depending on the content of the image.
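
As a rough sketch of how server-side nodes and content-dependent routing could fit into the same pipeline, the snippet below wraps a remote operation so that it looks like any local node, and adds a branch node that picks a sub-pipeline based on the image. Everything here is hypothetical: `remote_node`, `branch` and `fake_server` are invented names, the "server" is a local stand-in function, and a brightness check stands in for something like a face detector.

```python
from typing import Callable, List

Image = List[List[float]]            # grayscale image: rows of values in [0, 1]
Node = Callable[[Image], Image]      # a node maps an image to a new image


def remote_node(name: str, send: Callable[[str, Image], Image]) -> Node:
    """Wrap a server-side operation so it behaves like a local node.

    `send` is whatever transport the app would use (an assumption here).
    """
    return lambda img: send(name, img)


def branch(predicate: Callable[[Image], bool], if_true: Node, if_false: Node) -> Node:
    """Route the image down one of two sub-pipelines based on its content."""
    return lambda img: if_true(img) if predicate(img) else if_false(img)


def fake_server(name: str, img: Image) -> Image:
    """Local stand-in for the server so the sketch runs without a network."""
    if name == "invert":
        return [[1.0 - p for p in row] for row in img]
    raise ValueError(f"unknown server node: {name}")


def is_dark(img: Image) -> bool:
    """Stand-in for a content check such as 'does this image contain a face?'."""
    pixels = [p for row in img for p in row]
    return sum(pixels) / len(pixels) < 0.5


def identity(img: Image) -> Image:
    return img


# Dark images go through the (fake) server node; bright ones pass through.
invert_if_dark = branch(is_dark, remote_node("invert", fake_server), identity)
dark_out = invert_if_dark([[0.0, 0.2]])
bright_out = invert_if_dark([[0.9, 0.8]])
```

Because a remote node has the same shape as a local one, the rest of the pipeline would not need to know where the processing happens.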

Since pipelines are just descriptions of the nodes and their parameters, it would also be trivial to save and share them.
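
To illustrate what "just descriptions" could mean in practice, here is a small sketch that stores a pipeline as JSON and loads it back. The format is an assumption made up for this example (node `id`, `type`, `params`, `inputs`, plus an `output` field), not an existing standard.

```python
import json

# Hypothetical description format: each node has an id, a type, its parameters,
# and the ids of the nodes it reads from.
pipeline = {
    "name": "soft glow",
    "nodes": [
        {"id": "render", "type": "input", "params": {}, "inputs": []},
        {"id": "blur", "type": "blur", "params": {"radius": 4}, "inputs": ["render"]},
        {"id": "mix", "type": "mix", "params": {"factor": 0.5}, "inputs": ["render", "blur"]},
    ],
    "output": "mix",
}


def save(description: dict) -> str:
    """Serialize a pipeline description to text that can be stored or shared."""
    return json.dumps(description, indent=2)


def load(text: str) -> dict:
    """Parse a pipeline description and check that its references are valid."""
    description = json.loads(text)
    known = {node["id"] for node in description["nodes"]}
    for node in description["nodes"]:
        for source in node["inputs"]:
            if source not in known:
                raise ValueError(f"node {node['id']!r} reads from unknown node {source!r}")
    if description["output"] not in known:
        raise ValueError(f"unknown output node {description['output']!r}")
    return description


shared = save(pipeline)      # plain text: can go in a file, a link, a message
restored = load(shared)      # the receiver gets back the same description
```

Since only the description travels, not the images, a shared pipeline stays tiny and can be applied to any photo on the receiving side.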

A nice term for referring to the pipelines could be filters: a casual user does not really need to edit the pipeline, and the term is already established.

I started experimenting with the idea here